Another week, another headline declaring AI has officially surpassed physicians. This time, it's a study published in Science on April 30, 2026, claiming that OpenAI's o1 model "outperformed physician baselines" across multiple diagnostic reasoning tasks. The research comes from Harvard, Stanford, and Beth Israel Deaconess Medical Center. It's rigorous. It's peer-reviewed. And it's already being cited as proof that doctors are obsolete.

But here's what those viral headlines won't tell you: the study tested AI on text alone.

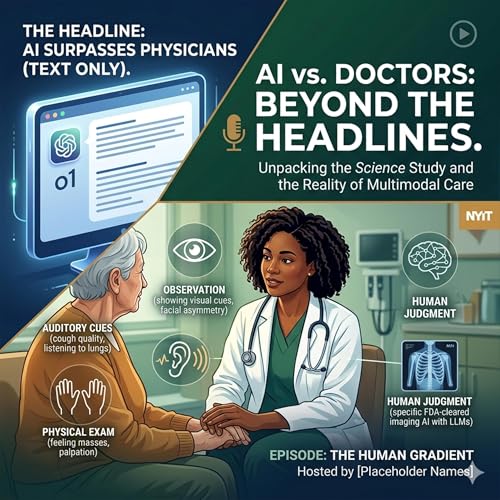

No images. No audio. No physical exams. No watching a patient walk through the door in distress before they utter a single word. No recognizing the subtle facial asymmetry that suggests stroke. No hearing the quality of a cough. No feeling a mass during examination. No interpreting the fear in a patient's eyes.

In other words—not real medicine.

In this episode, we unpack why this study, despite its methodological rigor, may be doing more harm than good. We explore the "headline-to-reality pipeline"—how clickbait economics strips away the authors' own caveats until all that remains is a misleading soundbite. We discuss the real-world consequences: misinformed patients with unrealistic expectations, demoralized clinicians, misallocated healthcare resources, and a generation of medical trainees learning exactly the wrong lessons about AI.

Perhaps most critically, we address the "chatbot conflation problem." When the public hears "AI in medicine," they picture ChatGPT. But as of late 2025, over 850 AI-enabled medical devices have received FDA clearance, more than 70% of them related to medical imaging. These task-specific systems, which detect pulmonary nodules, identify intracranial hemorrhages, and flag diabetic retinopathy, are fundamentally different from large language models answering text prompts. Different architecture. Different validation. Different regulatory pathways. Different levels of evidence. Lumping them together under "AI" does a disservice to both.

We also tackle a question the headlines never ask: What would a fair evaluation of AI in medicine actually look like? Hint—it would require multimodal inputs, messy real-world data, and a fundamentally different benchmark: not "Can AI beat doctors?" but "Do doctors WITH AI outperform doctors WITHOUT AI?"

Finally, we make the case for why medical education must lead this conversation. If we don't teach our students—and frankly, the broader public—the critical distinctions between AI tools, what happens? Clinicians lose trust not just in overhyped chatbots, but in all medical AI, including the FDA-cleared tools actually saving lives. That erosion of trust could take a generation to repair.

The technical findings of this study may be sound. But science doesn't exist in a vacuum. It exists in a media ecosystem that rewards sensationalism, in a healthcare system desperate for solutions, and in a culture increasingly willing to believe AI can do anything. The responsible approach is to be louder about limitations than findings.

Because right now, we're celebrating an AI that aced a written exam—while the actual test, the messy, multimodal, deeply human reality of clinical medicine, remains completely ungraded.

What You'll Learn:
• Why text-based AI evaluations fundamentally misrepresent clinical medicine
• The critical distinction between task-specific medical AI and general chatbots
• How clickbait economics transforms nuanced research into dangerous misinformation
• What fair AI evaluation in healthcare would actually require
• Why medical educators must lead the conversation on AI literacy

Resources Mentioned:
• Brodeur PG, et al. "Performance of a large language model on the reasoning tasks of a physician." Science. 2026;392(6797):524-527
• FDA AI-Enabled Medical Device Database
• Clinical AI Course (NYIT College of Osteopathic Medicine)

May 4, 2026, 8 mins
